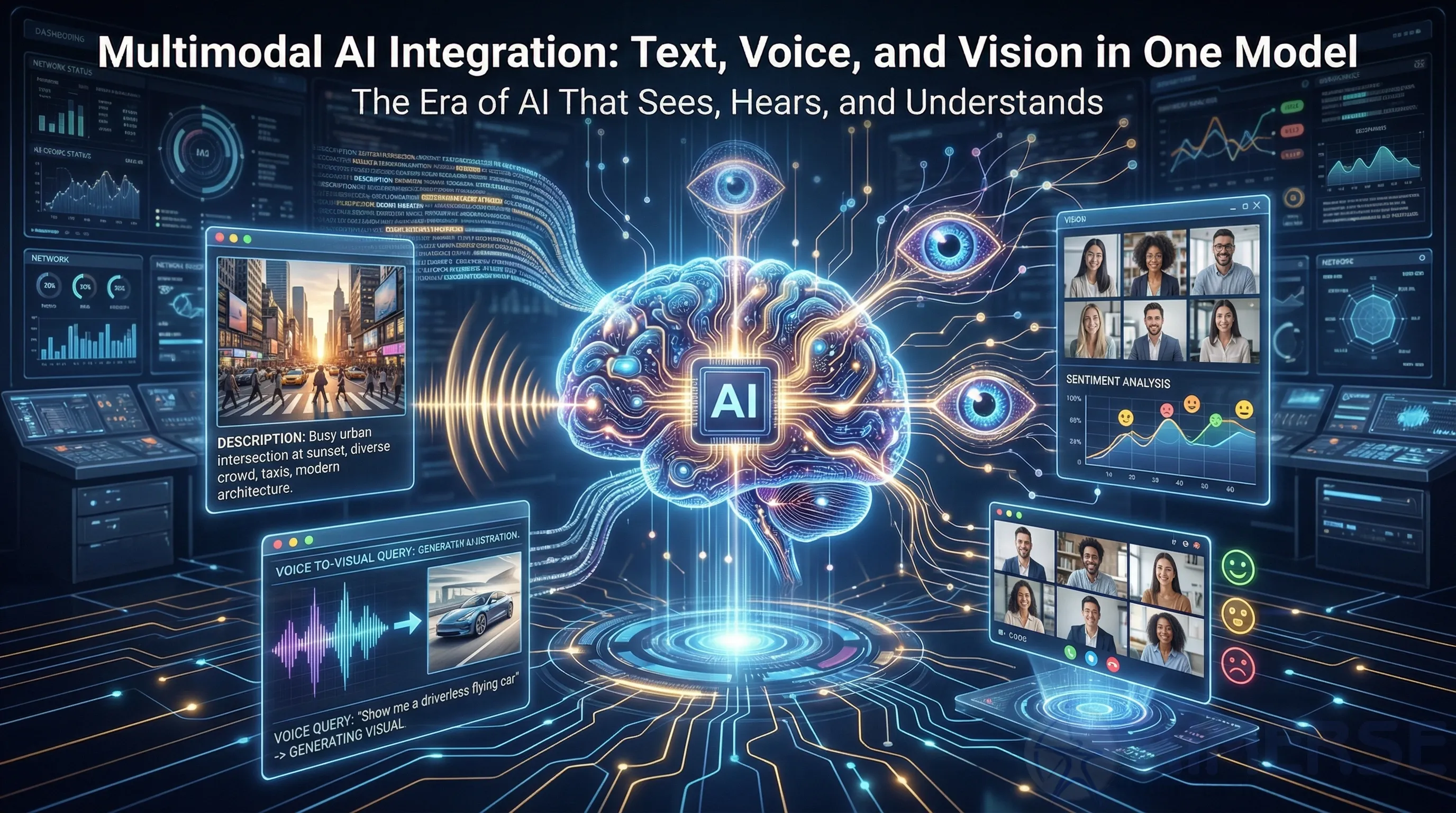

Multimodal AI Integration: Text, Voice, and Vision in One Model

In 2026, multimodal AI processes text, audio, and images together in a single model. That lets enterprise applications behave as one integrated system, with richer search and better customer insights as typical examples.

Models like GPT-4o and Gemini 2.0 process different inputs with a unified transformer architecture, with reported accuracy above 90% on some cross-modal tasks such as image captioning and voice-image search. Businesses use this to shorten time to insight, reportedly by up to 40%, by combining CRM data with call audio and user-submitted images. A major enabler is CLIP-style encoders, which map several modalities into a shared embedding space so that a new task does not require training from scratch.
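To make the CLIP-style idea concrete, here is a minimal sketch of cross-modal retrieval. It assumes an encoder has already turned a text query and a few images into vectors in the same space; the three-dimensional vectors and file names below are hand-written, hypothetical stand-ins, not real model output.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_by_similarity(query_embedding, candidates):
    """Return (name, score) pairs sorted from most to least similar."""
    scored = [
        (name, cosine_similarity(query_embedding, embedding))
        for name, embedding in candidates.items()
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical embeddings: in practice these would come from the text and
# image towers of a CLIP-style encoder, not from hand-written numbers.
text_query = [0.9, 0.1, 0.0]
image_embeddings = {
    "red_dress.jpg": [0.8, 0.2, 0.1],
    "blue_car.jpg": [0.1, 0.9, 0.2],
    "green_tree.jpg": [0.0, 0.2, 0.9],
}

ranking = rank_by_similarity(text_query, image_embeddings)
best_match = ranking[0][0]
```

Because text and images live in one space, the same ranking function serves text-to-image, image-to-text, and image-to-image search without any retraining.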

How it works

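- Fusion layers: Cross-attention combines embeddings from different modalities, for example image patches from a vision transformer with token embeddings from a BERT-style text encoder.

- Adapters: Small trainable modules that adapt a large model to a particular domain without retraining all of its weights.

- Outputs: Image-to-text or text-to-image generation, which can be exposed to applications through Node.js APIs.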

A multimodal model of this kind pairs well with React.js for interactive UIs and with Django on the server side.
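The fusion-layer bullet above can be illustrated with a small, dependency-free sketch of single-head scaled dot-product cross-attention. It is a simplification under stated assumptions: there are no learned projection matrices, text tokens act as queries, image patches act as both keys and values, and all vectors are hypothetical two-dimensional toys.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries, keys, values):
    """For each query, return an attention-weighted average of the values."""
    dim = len(keys[0])
    fused = []
    for q in queries:
        # Scaled dot product between this query and every key.
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim)
            for k in keys
        ]
        weights = softmax(scores)
        # Weighted sum of the value vectors, one output dimension at a time.
        fused.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return fused


# Hypothetical toy inputs: two text tokens attending over three image patches.
text_tokens = [[1.0, 0.0], [0.0, 1.0]]
image_patches = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

fused_tokens = cross_attention(text_tokens, image_patches, image_patches)
```

Each fused token is a blend of the image patches, weighted by how well they match that token. A production model would add learned query, key, and value projections and several attention heads.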

Industry applications

- Customer service: Sentiment and visual analysis of video, with auto-escalation pipelines integrated into a Laravel back end.

- Search engines: Natural-language queries such as “Find red dress in video” return the matching frames or clips.

- Content moderation: Hate speech detection in memes by combining text and image analysis.
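As a rough illustration of the content-moderation case, the sketch below uses late fusion: a weighted average of two per-modality scores. This is one simple option and is named here as an assumption, not as the method any particular product uses. The scores, the weight, and the 0.7 threshold are all hypothetical; in practice the scores would come from separate text and image classifiers.

```python
def fuse_scores(text_score, image_score, text_weight=0.5):
    """Weighted average of two scores in [0, 1] (late fusion)."""
    if not 0.0 <= text_weight <= 1.0:
        raise ValueError("text_weight must be between 0 and 1")
    return text_weight * text_score + (1.0 - text_weight) * image_score


def moderate(text_score, image_score, threshold=0.7, text_weight=0.5):
    """Return a hypothetical moderation decision for one meme."""
    fused = fuse_scores(text_score, image_score, text_weight)
    return "flag_for_review" if fused >= threshold else "allow"


# Hypothetical classifier outputs for two memes.
risky = moderate(text_score=0.9, image_score=0.8)
benign = moderate(text_score=0.2, image_score=0.3)
```

Late fusion is easy to deploy, but it can miss memes whose text and image are each harmless alone and offensive only in combination. Catching those generally calls for joint modeling of both inputs, such as the cross-attention fusion described earlier.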

Challenges and solutions

- Aligning data across modalities used to be a major obstacle; synthetic datasets now make it easier.

- Compute costs can be reduced by moving inference to edge devices.

Deployment strategy

- Select a foundation model (such as LLaVA for open-source applications).

- Expose it through an API, for example with Spring Boot for large-scale applications.

- Build the frontend with React.js for real-time applications.

- Use a hybrid cloud/edge architecture to keep latency below 200 ms.

Hosted model APIs such as those from Hugging Face can shorten this path and accelerate adoption in 2026.
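The hybrid cloud/edge step can be sketched as a small routing rule. The example assumes the cloud model is the more capable one, so it is preferred whenever its estimated latency fits the 200 ms budget. The function name, the latency estimates, and that preference are all hypothetical choices made for illustration.

```python
LATENCY_BUDGET_MS = 200.0  # target taken from the deployment list above


def choose_target(edge_latency_ms, cloud_latency_ms, budget_ms=LATENCY_BUDGET_MS):
    """Pick where to run inference given estimated round-trip latencies."""
    # Assumption: the cloud model is more capable, so use it when it fits.
    if cloud_latency_ms <= budget_ms:
        return "cloud"
    # Otherwise fall back to the edge model if that one fits the budget.
    if edge_latency_ms <= budget_ms:
        return "edge"
    # Neither fits: choose whichever is expected to respond sooner.
    return "edge" if edge_latency_ms <= cloud_latency_ms else "cloud"


# Hypothetical latency estimates, e.g. from a rolling average of recent calls.
good_network = choose_target(edge_latency_ms=60.0, cloud_latency_ms=150.0)
slow_network = choose_target(edge_latency_ms=60.0, cloud_latency_ms=320.0)
```

A real deployment would refresh the estimates continuously and might also weigh cost or model quality, but the budget check itself can stay this simple.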

Conclusion

Adopting multimodal AI means integrating the frontend, real-time data, and vision components into a single, powerful system. Combining human-like perception with enterprise-grade execution improves decision-making and can provide a durable competitive advantage.